- Home

- Services

- About

- News

- Contact

- System recovery windows 10 lose personal files

- Why is everything so complicated song rock

- Free youtube mp3 converter app apk

- Pro landscape design software free

- Adobe creative cloud app download

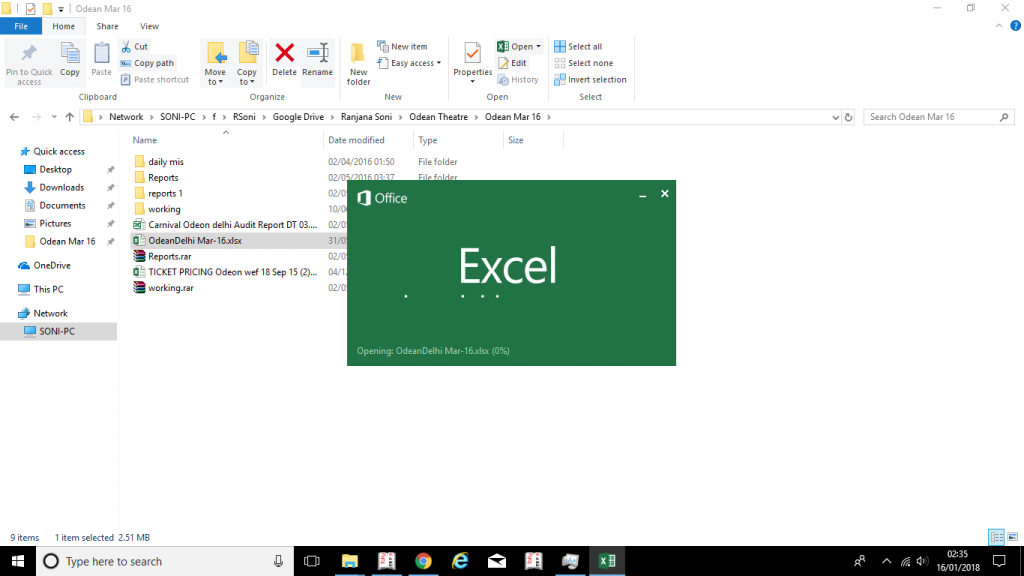

- Excel 2016 slow to open files

- Inuyasha season 3 episode list

- Super strain of head lice

- Spintires mods download

#EXCEL 2016 SLOW TO OPEN FILES HOW TO#

I'm trying to combine 10 Excel files in a folder (each one is about 15 MB). When I apply changes, it takes about 4 hours to refresh the query and uses all of the memory and CPU! I've unchecked a few boxes in Settings to help speed up the load and read countless threads about buffering and folding, but they don't seem to be applicable to my case, or I simply don't know how to use them. Can anyone please take a look at my query and let me know how I can fix it?

The query steps, as far as they survive in the post (several are truncated):

    Source=Folder.Files("M:\Dept\Global Interim Data Set (GIDS)"),
    #"Removed Other Columns" = Table.SelectColumns(Source,),
    List.Transform(#"Removed Columns4", each Table.ColumnNames(_)))),
    Table.ExpandTableColumn(#"Removed Columns4", "Skip first 4",unioncol)),
    RemoveEmpty = FnRemoveEmptyColumns(ExpandCol),
    ColName = Table.ColumnNames(RemoveEmpty),
    #"Removed Duplicates" = Table.Buffer(Table.Distinct(RemoveEmpty, List.Range(Table.ColumnNames(RemoveEmpty),1,162)))

I put in the "Table.Skip" because, in all the files I'm combining, the first 4 rows do not need to be transferred to the query. The custom function is used to remove all the empty columns from the files. I will try to create a function to connect the data to see how much of a difference it makes.

Each file contains over 100 columns. I'm using Power Query to combine the data because files will be added to the folder on a monthly basis, and I then build a data model out of the flat files with 100 columns. I'm just so frustrated that it takes so long to combine the files every time I refresh the data model.

One other thing: it seems that for every step added in PQ, the data is retrieved again from the very first step. I don't really understand why, and I think that's another issue that makes the data super slow to load. By adding the Table.Buffer step, I thought the purpose was to cache the table in memory to accelerate the data load. However, I realized that when adding a step after the buffer step, the data was still being pulled from the beginning. Am I understanding how Table...

#EXCEL 2016 SLOW TO OPEN FILES WINDOWS 7#

One common problem faced on many computers is the slow launching or termination of MS Word. This problem is usually due to a corrupted or problematic "Normal.dot" template. The "Normal.dot" template is where Word saves all the default settings (e.g. font type, font size etc.), and it is used with every Word file, blank or not, that you open.

This tutorial fixes the following problem(s) of Word: Word is too slow to start, load documents or close.

To solve these problems, find the Word templates folder and delete the "Normal.dot" (or "Normal.dotm" if you have Word 2007/2010) file:

1. With your MS Word application closed, go to Start > Run.
2. At the Run command box, copy and paste the command for your operating system version and then press OK. For Windows Vista / Windows 7 / Windows 8 & Windows 10: %USERPROFILE%\Application Data\Microsoft\Templates\
3. Locate the file called "Normal.dot" if you have Word 2003, or the "Normal.dotm" file if you have Word 2007, 2010, 2013 or a later version of MS Office, and delete it.
4. Next time you open MS Word, a new, clean Normal template (Normal.dot, Normal.dotm) will be created.

* Additional help: If Word is still slow when launching or closing after performing the above, restore it to its default settings.
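The Word fix described in this article (removing the Normal template so that Word builds a clean one on its next start) can also be scripted. The following is only a minimal sketch: the function names `default_templates_dir` and `set_aside_normal_template` are hypothetical, the `%APPDATA%\Microsoft\Templates` default is an assumption about Windows Vista and later, and the script renames the file to a `.bak` copy instead of deleting it as the article does, so the change can be undone.

```python
import os
from pathlib import Path
from typing import List

# File names taken from the article: Word 2003 uses Normal.dot,
# Word 2007 and later use Normal.dotm.
TEMPLATE_NAMES = ("Normal.dot", "Normal.dotm")


def default_templates_dir() -> Path:
    """Assumed default templates folder: %APPDATA%\\Microsoft\\Templates.

    This location is an assumption, not something the article states;
    pass a different folder if your installation uses another one.
    """
    return Path(os.environ.get("APPDATA", "")) / "Microsoft" / "Templates"


def set_aside_normal_template(templates_dir: Path) -> List[Path]:
    """Rename any Normal template in templates_dir to '<name>.bak'.

    Word should be closed before calling this. Returns the list of
    backup paths that were created (empty if no template was found).
    """
    backups = []
    for name in TEMPLATE_NAMES:
        template = templates_dir / name
        if template.is_file():
            backup = template.with_name(template.name + ".bak")
            # Path.replace overwrites an older backup with the same name.
            template.replace(backup)
            backups.append(backup)
    return backups
```

With Word closed, calling `set_aside_normal_template(default_templates_dir())` would move the template out of the way. To undo the change, rename the `.bak` file back to its original name.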
